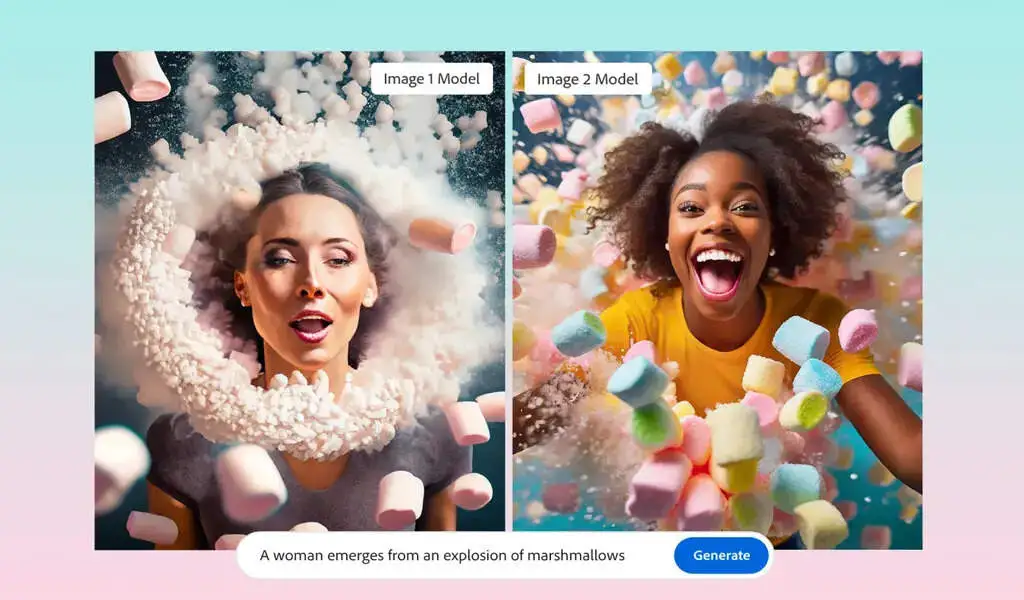

Image Generation In Adobe Firefly Is Now More Realistic

(CTN News) – During MAX, Adobe’s annual conference for creative professionals, the company announced that it has updated the models that power Firefly, its generative AI image creation service.

The Firefly Image 2 Model, as it is officially known, promises to render humans more accurately, including their facial features, skin, hair, bodies, and hands (which image generators have long struggled to get right).

In addition, Adobe announced today that Firefly’s users have generated three billion images since the service launched about half a year ago, with one billion images generated just last month.

The vast majority of Firefly users (90%) are also new to Adobe’s products.

The majority of these users probably use the Firefly web application, which helps explain why, a few weeks ago, the company decided to make Firefly a full-fledged component of the Creative Cloud.

The new model was not only trained on more recent images from Adobe Stock and other commercially safe sources, but is also significantly larger, according to Alexandru Costin, Adobe’s VP for generative AI and Sensei.

Firefly is made up of multiple models, and the size of each has been increased by a factor of three, Costin said.

The company also increased the dataset by almost a factor of two, which should improve the model’s understanding of what users are asking for.

Costin likened the new model to a brain that is three times larger, one that knows how to make connections and render more beautiful pixels and details for the user.

There is no doubt that the larger model is more resource-intensive, but Costin stated that it should run at the same speed as the first model.

Costin said Adobe will continue to explore and invest in distillation, pruning, optimization, and quantization of its models.

The aim is to give customers a similar experience while avoiding ballooning cloud costs. Currently, Adobe’s focus is on quality rather than optimization.

For now, the new model will be available through the Firefly web app, but it will be added to Creative Cloud apps such as Photoshop in the near future, where it will power popular features such as generative fill. Costin stressed the importance of that integration.

In Adobe’s view, generative artificial intelligence is not so much about content creation as it is about generative editing.

The main reason Photoshop generative fill is so successful is that it does not just generate new assets, but also uses existing assets – a photo shoot, a product shoot – to enhance existing workflows.

That is why Adobe’s umbrella term for this work is generative editing rather than just text-to-image, which Costin said is more important to the company’s customers.

Alongside the new model, Adobe is introducing a few new controls in the Firefly web app that allow users to adjust the depth of field, motion blur, and field of view for their images.

There is also the option of uploading an existing image and having Firefly match the style of the image, as well as an auto-complete feature for writing your prompts (which Adobe claims will help you come up with a better image).
