Anti-Facial Recognition Hyper-Realistic 3D Masks to Go on Sale in Japan

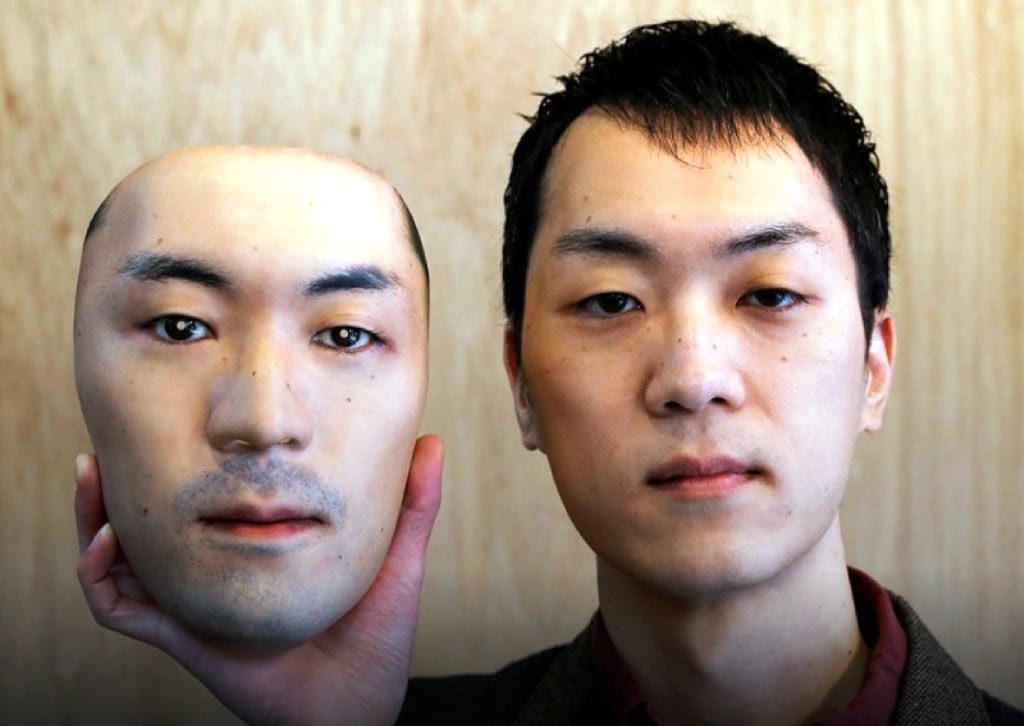

A year into the coronavirus epidemic, a Japanese retailer has come up with a new take on the theme of facial camouflage: a hyper-realistic, anti-facial-recognition mask that models a stranger’s features in three dimensions.

Shuhei Okawara’s masks won’t protect you or others against the virus. But they will lend you the exact appearance of an unidentified Japanese adult whose features have been printed onto them.

“Mask shops in Venice probably do not buy or sell faces. But that is something that’s likely to happen in fantasy stories,” Okawara told Reuters.

The masks will go on sale early next year for 98,000 yen (US$950) apiece at his Tokyo shop, Kamenya Omote, whose products are popular as accessories for parties and theatrical performances.

Okawara chose his model, whom he paid 40,000 yen (US$385), from more than 100 applicants who sent him their photos when he launched the project in October.

An artisan then reworked the winning image, created on a 3D printer. Initial inquiries suggest demand for the masks will be strong, Okawara said.

“As is often the case with the customers of my shop, there are not so many people who buy (face masks) for specific purposes. Most see them as art pieces,” Okawara said. He plans to gradually add new faces, including some from overseas, to the lineup.

Which of these faces is real?

The Japan Times reports that facial recognition programs are used by law enforcement to identify and arrest criminal suspects (and also by some activists to reveal the identities of police officers who cover their name tags in an attempt to remain anonymous).

These people may look familiar. They may look like users you’ve seen on Facebook, Twitter or Tinder, or maybe people whose product reviews you’ve read on Amazon. They look stunningly real at first glance, but they do not exist. They were born from the mind of a computer.

There are now businesses that sell fake people. On the website Generated.Photos, you can buy a “unique, worry-free” fake person for $2.99, or 1,000 people for $1,000. If you just need a couple of fake people, for characters in a video game or to make your company website appear more diverse, you can get their photos free on ThisPersonDoesNotExist.com. Adjust their likeness as needed. Make them old, young or the ethnicity of your choosing. If you want your fake person animated, a company called Rosebud.AI can do that and can even make them talk.

These simulated people are also starting to show up around the internet, used as masks by real people with nefarious intent: spies who don an attractive face in an effort to infiltrate the intelligence community; right-wing propagandists who hide behind fake profiles, photo and all; online harassers who troll their targets with a friendly visage.

Detection will only get harder over time

These creations became possible only in recent years thanks to a new type of artificial intelligence called a generative adversarial network, or GAN. In essence, you feed a computer program a heap of photos of real people. It studies them and tries to come up with its own photos of people, while another part of the system tries to detect which of those photos are of fake people. The back-and-forth makes the end product ever more indistinguishable from the real thing.
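For readers who want to see that back-and-forth in miniature, here is a toy sketch in Python. It is not the software behind any of the sites mentioned here. Each "photo" is reduced to a single number, the forger is a two-parameter formula, and the detector is a one-line classifier. Every name and setting in it (`real_mean`, `lr`, the step functions) is an assumption made up for illustration.

```python
import math
import random


def sigmoid(t):
    """Squash a real number into (0, 1); read here as 'chance the sample is real'."""
    return 1.0 / (1.0 + math.exp(-t))


def discriminator_step(w, c, x_real, x_fake, lr):
    """The detector's turn: one gradient step on -[log D(x_real) + log(1 - D(x_fake))]."""
    s_real = sigmoid(w * x_real + c)
    s_fake = sigmoid(w * x_fake + c)
    grad_w = (s_real - 1.0) * x_real + s_fake * x_fake
    grad_c = (s_real - 1.0) + s_fake
    return w - lr * grad_w, c - lr * grad_c


def generator_step(a, b, w, c, z, lr):
    """The forger's turn: one gradient step on -log D(G(z)), where G(z) = a*z + b."""
    x_fake = a * z + b
    s_fake = sigmoid(w * x_fake + c)
    grad_x = (s_fake - 1.0) * w  # how the forger's loss changes with its output
    return a - lr * grad_x * z, b - lr * grad_x


def train(steps=2000, lr=0.05, real_mean=4.0, seed=0):
    """Alternate detector and forger updates; 'real' samples are noise around real_mean."""
    rng = random.Random(seed)
    a, b = 1.0, 0.0  # forger starts out producing numbers near 0
    w, c = 0.0, 0.0  # detector starts out unable to tell anything apart
    for _ in range(steps):
        x_real = rng.gauss(real_mean, 1.0)
        z = rng.gauss(0.0, 1.0)
        w, c = discriminator_step(w, c, x_real, a * z + b, lr)
        z = rng.gauss(0.0, 1.0)
        a, b = generator_step(a, b, w, c, z, lr)
    return a, b, w, c


if __name__ == "__main__":
    a, b, w, c = train()
    print(f"forger now produces roughly {b:.2f} + {a:.2f} * noise")
```

Real face generators replace both the two-parameter forger and the one-line detector with deep neural networks trained on very large photo collections, but the alternating updates follow the same pattern.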

Given the pace of improvement, it’s easy to imagine a not-so-distant future in which we are confronted with not just single portraits of fake people but whole collections of them — at a party with fake friends, hanging out with their fake dogs, holding their fake babies. It will become increasingly difficult to tell who is real online and who is a figment of a computer’s imagination.

“When the tech first appeared in 2014, it was bad; it looked like the Sims,” said Camille Francois, a disinformation researcher whose job is to analyze the manipulation of social networks. “It’s a reminder of how quickly the technology can evolve. Detection will only get harder over time.”

Advances in facial fakery have been made possible in part because technology is now so much better at identifying key facial features. You can use your face to unlock your smartphone, or tell your photo software to sort through your thousands of pictures and show you only those of your child.