
New York Times Blocks OpenAI’s GPTBot: Restricting Web Content Access and the Opt-Out Solution

(CTN NEWS) – Recent reports indicate that the New York Times is considering legal action against OpenAI, the creator of ChatGPT.

The dispute centers on claims that OpenAI’s AI models incorporated material from the New York Times’ website, content the newspaper regards as its intellectual property.

Although no legal action has been taken thus far, the prominent news outlet has opted to proactively prohibit OpenAI’s web crawler from accessing its website’s content.

Consequently, the restriction keeps the website’s content from being collected by that crawler to train OpenAI’s foundation models.

OpenAI Web Content Access and Opt-Out Initiatives

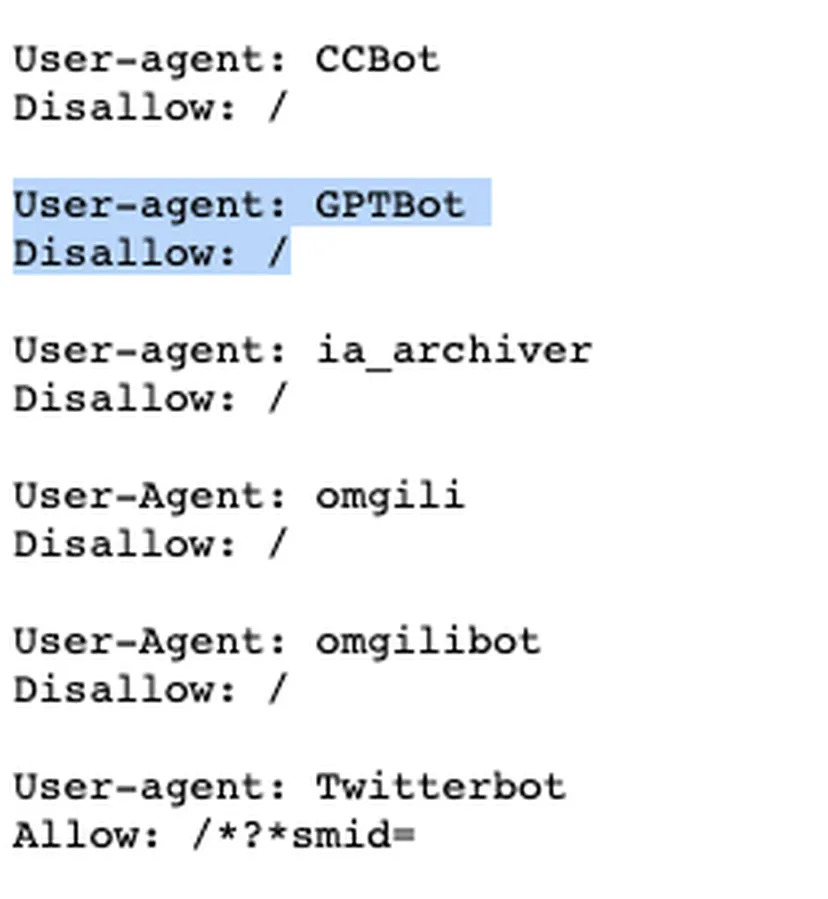

According to a recent article from The Verge, The New York Times (NYT) has taken steps to prevent OpenAI’s web crawler, known as GPTBot, from crawling and indexing the content on its website.

The report draws attention to a specific webpage on the publication’s site that explicitly confirms the bot’s restricted access.

The Internet Archive’s Wayback Machine, a tool that lets users view earlier versions of web pages, shows that the bot’s access had been blocked as of August 17th.

This action follows OpenAI’s implementation of an “opt-out” mechanism for website proprietors who do not wish their website content to be employed in training the company’s AI models.

On August 7th, OpenAI explained that GPTBot can be kept from accessing a site’s content by adding a rule to the site’s robots.txt file.

Concurrently, in its blog post, OpenAI underscored the utilization of such content, stating, “Web pages that are traversed by the GPTBot user agent might potentially contribute to enhancing future models.

These pages are carefully screened to eliminate sources demanding payment, collecting personally identifiable information (PII), or containing text that violates our guidelines.”
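To make the opt-out concrete, here is a minimal sketch of a robots.txt rule that disallows the GPTBot user agent for an entire site, checked with Python's standard `urllib.robotparser`. The `example.com` URLs and the `is_allowed` helper are illustrative assumptions, not taken from the NYT's actual file.

```python
from urllib.robotparser import RobotFileParser

# A robots.txt rule that blocks GPTBot from the whole site.
# Other crawlers are unaffected because no rule names them.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /
"""


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if robots_txt permits user_agent to fetch url (hypothetical helper)."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    print(is_allowed(ROBOTS_TXT, "GPTBot", "https://example.com/article"))
    print(is_allowed(ROBOTS_TXT, "OtherBot", "https://example.com/article"))
```

Note that robots.txt is a request rather than an enforcement mechanism: it only works when the crawler chooses to honor it.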

Web Crawlers in AI Training: Navigating Content Indexing and Social Media Responses

For those who might not be familiar, a web crawler, also referred to as a web spider, is essentially a computer program designed to autonomously explore and index the content present on websites.

It systematically navigates through all the URLs within a website, collecting data to compile its own informational database. In the current landscape, such web crawlers are extensively employed by AI enterprises to train their foundational models.
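The crawling process described above can be sketched in a few lines of Python. This is a simplified illustration, not how GPTBot or any production crawler is built: it walks a small in-memory "site" (the `SITE` dictionary and `example.com` URLs are made up for the example) instead of making network requests, and follows only links on the same host.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkExtractor(HTMLParser):
    """Collects the href value of every <a> tag in a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def crawl(start_url, fetch):
    """Breadth-first crawl of one host.

    fetch is any callable that returns a page's HTML, or None if the
    page is unavailable. Returns a dict mapping each visited URL to its HTML.
    """
    host = urlparse(start_url).netloc
    seen = {start_url}
    queue = deque([start_url])
    index = {}
    while queue:
        url = queue.popleft()
        html = fetch(url)
        if html is None:
            continue
        index[url] = html
        extractor = LinkExtractor()
        extractor.feed(html)
        for href in extractor.links:
            link = urljoin(url, href)  # resolve relative links
            if urlparse(link).netloc == host and link not in seen:
                seen.add(link)
                queue.append(link)
    return index


# Hypothetical three-page site used in place of real HTTP requests.
SITE = {
    "https://example.com/": '<a href="/a">A</a> <a href="/b">B</a>',
    "https://example.com/a": '<a href="/">Home</a> <a href="https://other.example/x">External</a>',
    "https://example.com/b": "No links here.",
}

if __name__ == "__main__":
    for page_url in crawl("https://example.com/", SITE.get):
        print(page_url)
```

A real crawler would add HTTP fetching, politeness delays, and a robots.txt check before each request.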

In recent times, several social media platforms have taken measures to address this trend.

Twitter, for instance, has implemented a temporary tweet rate limit aimed at preventing these web crawlers from extracting content from its platform. Similarly, Reddit has introduced a new API policy with the intent of discouraging the activities of web crawlers.

Nevertheless, OpenAI stands out as one of the few AI companies that offer a straightforward way to keep content out of reach of its GPTBot crawler.

In a report by NPR published last week, it was disclosed that The New York Times (NYT) is considering the possibility of pursuing legal action against the creators of ChatGPT.

This development follows the two parties’ failure to agree on a licensing arrangement. The proposed agreement centered on OpenAI paying a predetermined sum to use NYT’s articles to train its AI models.
