Data is the lifeblood of the digital world, and data scraping is what keeps it flowing!

In our increasingly data-driven world, the value of information cannot be overstated. The abundance of online data has made it essential for businesses, researchers, and individuals to extract and analyze relevant information. This is where data scraping comes in.

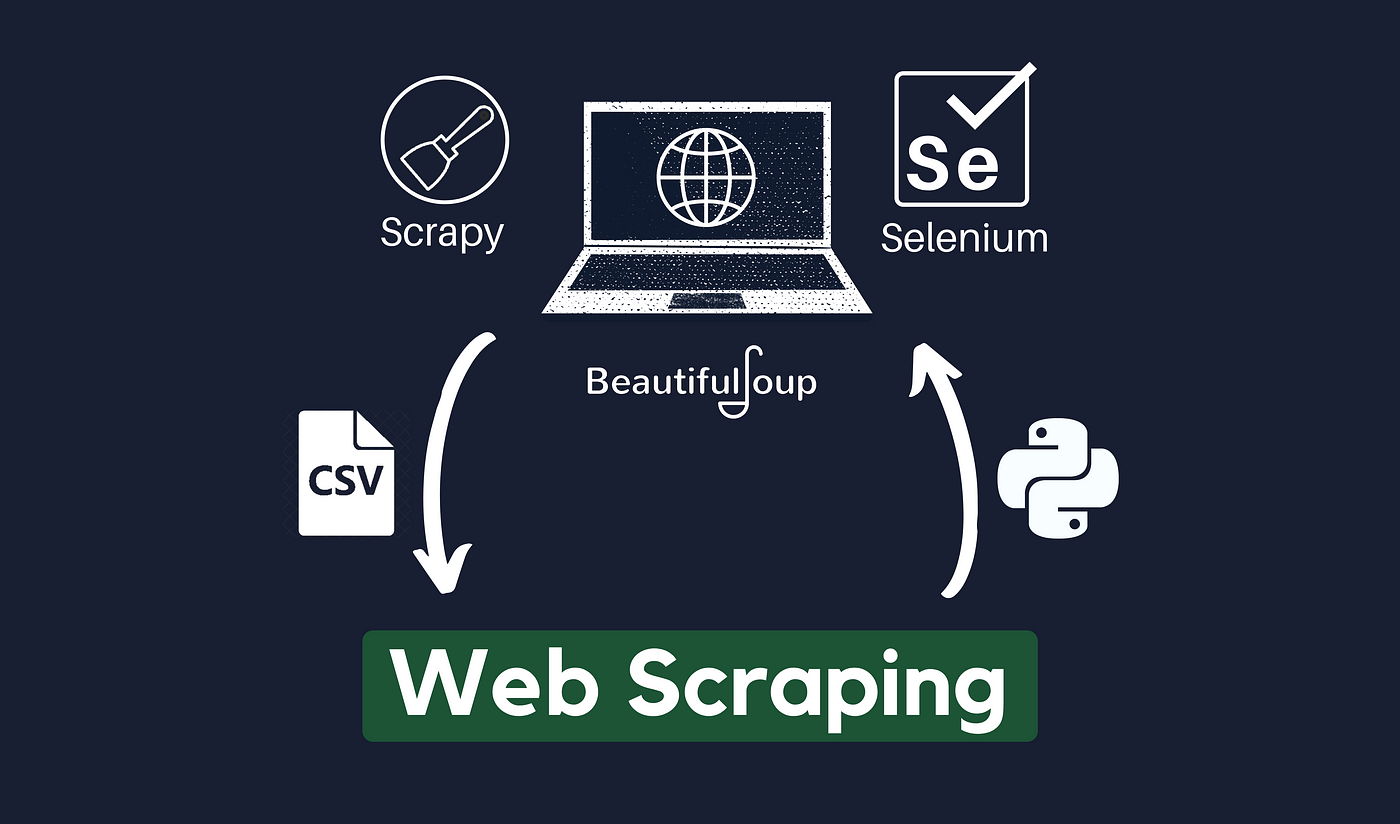

Web scraping is an automated technique for extracting data from websites. Using specialized software or programming methods, web scraping collects data from web pages and converts it into a structured format for further analysis and interpretation.

Its popularity stems from its ability to gather large volumes of data from diverse sources efficiently. This article will look at the fundamentals of data scraping, including the techniques used.

Why Is the Whole World Chasing Data?

The global fascination with data stems from its enormous potential to drive transformation. Data empowers businesses with the information they need to make well-informed decisions, gain valuable insights, and identify important trends. Its applications are wide-ranging, from market research and competitive analysis to price comparison and sentiment tracking. Researchers rely heavily on data to discover new insights, validate their hypotheses, and push the boundaries of scientific knowledge.

As a result, the pursuit of data is relentless worldwide, and data scraping plays a crucial role in it. By extracting valuable information from websites, data scraping opens the door to a vast pool of data that fuels analysis, research, and the development of data-driven solutions. It helps individuals, businesses, and researchers stay at the forefront of their respective fields by putting that data to work.

What is Data Scraping? Is Data Scraping Legal?

Data scraping, or web scraping, is a vital technique developers use to automate data extraction from websites. It involves programmatically retrieving and parsing HTML or XML code to extract specific information for analysis, storage, or further processing. Developers write scraping scripts with various tools and programming languages; these scripts traverse web pages, locate the relevant pieces of data, and extract them in an organized way.

This extracted data can then be used for many applications, including research, lead generation, price comparison, content aggregation, and monitoring. Web scraping lets developers efficiently collect and leverage data from the Web, enabling them to create innovative solutions and drive data-driven decision-making.

As for legality, whether data scraping is legal depends on factors such as the jurisdiction and the purpose of scraping. It is important to review and comply with a website’s Terms of Service. Scraping copyrighted content can also raise legal concerns.

Is Data Scraping Similar to Data Crawling?

Crawling is the process used by large search engines such as Google, whose crawler bots (such as Googlebot) explore the internet to index web content. Scraping, on the other hand, focuses on extracting specific data from a particular website.

Here are three key differences in the behavior of scraper bots compared to web crawler bots:

- Pretending to be web browsers: Scraper bots often mimic web browsers to deceive websites, while crawler bots openly identify themselves and their purpose (for example, in their user-agent string) instead of misrepresenting themselves.

- Advanced actions: Scrapers may perform advanced actions like filling out forms or engaging in specific behaviors to access certain parts of a website. In contrast, crawlers typically do not engage in such actions and simply crawl and index web pages.

- Ignoring robots.txt: Scrapers usually disregard the instructions in a site’s robots.txt file, which tells web crawlers which parts of the site they may access and which areas to avoid. Because scrapers are designed to extract specific content, they may ignore directives intended to keep certain pages from being fetched. Nothing technically enforces robots.txt; compliance is voluntary.
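
For comparison, a scraper that wants to behave like a well-mannered crawler can check robots.txt before each request. Python’s standard library includes `urllib.robotparser` for this. The sketch below parses an in-memory example file instead of downloading one; the rules, the `example-scraper` user-agent name, and the URLs are made up for illustration.

```python
from urllib.robotparser import RobotFileParser

# Example robots.txt content (hypothetical rules, for illustration only).
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 5
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text that has already been obtained."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


parser = build_parser(ROBOTS_TXT)
agent = "example-scraper"  # hypothetical user-agent name

# can_fetch() answers: may this user agent request this URL?
print(parser.can_fetch(agent, "https://example.com/products"))      # True
print(parser.can_fetch(agent, "https://example.com/private/data"))  # False
# crawl_delay() returns the Crawl-delay (seconds) that applies to the agent, if any.
print(parser.crawl_delay(agent))                                      # 5
```

Against a live site you would call `set_url()` and `read()` on the parser instead of `parse()`.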

Three Steps in Scraping Website Data

Web scraping involves a three-step process, which can be conceptually simple yet technically intricate:

- The scraper bot, implemented as code, sends an HTTP GET request to the target website to retrieve the page containing the desired information.

- Upon receiving a response from the website, the scraper analyzes the HTML document, carefully extracting the required data based on a predefined pattern.

- Finally, the extracted data is converted into the format intended by the author of the scraper bot (for example, CSV, JSON, or rows in a database).
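
The three steps can be sketched with nothing but Python’s standard library. This is a minimal illustration, not a production scraper: the `price` class name, the `example-scraper/0.1` user agent, and the helper names `fetch`, `extract`, and `transform` are all hypothetical, and the demo runs on a static HTML string so it works offline.

```python
import json
import urllib.request
from html.parser import HTMLParser


def fetch(url: str) -> str:
    """Step 1: send an HTTP GET request and return the body as text."""
    request = urllib.request.Request(url, headers={"User-Agent": "example-scraper/0.1"})
    with urllib.request.urlopen(request, timeout=10) as response:
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")


class PriceExtractor(HTMLParser):
    """Step 2: collect the text of elements whose class list contains "price".

    The "price" class is a hypothetical pattern; each real site needs its own.
    """

    def __init__(self) -> None:
        super().__init__()
        self.prices: list[str] = []
        self._capturing = False

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        if "price" in classes:
            self._capturing = True

    def handle_endtag(self, tag):
        # Simplification: any closing tag ends the capture, so nested markup
        # inside a price element is not supported.
        self._capturing = False

    def handle_data(self, data):
        if self._capturing and data.strip():
            self.prices.append(data.strip())


def extract(html: str) -> list[str]:
    parser = PriceExtractor()
    parser.feed(html)
    parser.close()
    return parser.prices


def transform(prices: list[str]) -> str:
    """Step 3: convert the raw strings into the target format (JSON here)."""
    return json.dumps([{"price": float(p.lstrip("$"))} for p in prices])


# Offline run on a static snippet; against a live site you would call
# transform(extract(fetch(url))) with a URL you are permitted to scrape.
SAMPLE_HTML = (
    '<ul><li><span class="price">$9.99</span></li>'
    '<li><span class="price sale">$4.50</span></li></ul>'
)
print(transform(extract(SAMPLE_HTML)))  # [{"price": 9.99}, {"price": 4.5}]
```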

What Is the Prime Goal of Data Scraping?

Scraper bots serve various purposes, including:

- Content scraping: These bots extract content from websites, enabling the replication of unique features or advantages of a specific product or service. For instance, a competitor may scrape review content from platforms like Yelp and reproduce it on their site, falsely presenting it as original content.

- Price scraping: Competitors employ scraper bots to gather pricing data, allowing them to aggregate information about their rivals. This enables them to formulate competitive strategies and gain a unique advantage in the market.

- Contact scraping: Scraper bots can extract contact details from websites, such as e-mail addresses and phone numbers. This information can be used to create bulk mailing lists, conduct robocalls, or engage in malicious social engineering attempts. Spammers and scammers often rely on contact scraping to find new targets for their activities.

How to Do Data Scraping: Top Data Scraping Techniques

So how do you scrape data from a website?

Understanding the various data scraping techniques can significantly improve your ability to extract data from a website. With the following techniques, you can retrieve data from websites effectively and build robust, data-driven applications on top of it.

Here are some commonly used techniques for data scraping:

HTML Parsing: With this technique, the HTML structure of a web page is parsed using tools like Python’s BeautifulSoup or Cheerio for Node.js. You can then explore the parsed HTML tree to find specific elements by tag, class, or ID and extract the appropriate data.
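
BeautifulSoup and Cheerio build the tree for you. To show what exploring a parsed HTML tree means without third-party packages, the sketch below builds a minimal tree with Python’s built-in `html.parser` and filters it by tag, class, or ID. The `Node`, `TreeBuilder`, `find_all`, and `parse_html` names are hypothetical; `find_all` is loosely modeled on BeautifulSoup’s method of the same name but is not that library’s API.

```python
from html.parser import HTMLParser

# Elements that never have a closing tag in HTML.
VOID_TAGS = {"area", "base", "br", "col", "embed", "hr", "img", "input",
             "link", "meta", "source", "track", "wbr"}


class Node:
    """One element in the parsed tree; children are Nodes or text strings."""

    def __init__(self, tag, attrs):
        self.tag = tag
        self.attrs = dict(attrs)
        self.children = []

    @property
    def text(self):
        return "".join(c if isinstance(c, str) else c.text for c in self.children)

    def iter(self):
        """Yield this node and every descendant element, depth first."""
        yield self
        for child in self.children:
            if isinstance(child, Node):
                yield from child.iter()

    def find_all(self, tag=None, class_=None, id_=None):
        """Return every element in this subtree that matches all given filters."""
        return [
            node for node in self.iter()
            if (tag is None or node.tag == tag)
            and (class_ is None or class_ in (node.attrs.get("class") or "").split())
            and (id_ is None or node.attrs.get("id") == id_)
        ]


class TreeBuilder(HTMLParser):
    """Turns html.parser's start/end/data events into a Node tree."""

    def __init__(self):
        super().__init__()
        self.root = Node("document", [])
        self._stack = [self.root]

    def handle_starttag(self, tag, attrs):
        node = Node(tag, attrs)
        self._stack[-1].children.append(node)
        if tag not in VOID_TAGS:
            self._stack.append(node)

    def handle_startendtag(self, tag, attrs):
        self._stack[-1].children.append(Node(tag, attrs))  # e.g. <br/>

    def handle_endtag(self, tag):
        # Pop back to the nearest open element with this tag; ignore stray end tags.
        for i in range(len(self._stack) - 1, 0, -1):
            if self._stack[i].tag == tag:
                del self._stack[i:]
                break

    def handle_data(self, data):
        self._stack[-1].children.append(data)


def parse_html(html):
    builder = TreeBuilder()
    builder.feed(html)
    builder.close()
    return builder.root


page = parse_html("""
<div id="products">
  <h2 class="title">Laptop</h2><p class="price">$999</p>
  <h2 class="title">Mouse</h2><p class="price">$25</p>
</div>
""")
print([n.text for n in page.find_all(tag="h2", class_="title")])  # ['Laptop', 'Mouse']
print([n.text for n in page.find_all(class_="price")])            # ['$999', '$25']
```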

XPath Queries: XPath is a query language for selecting elements (nodes) in an XML or HTML document. Using XPath expressions, you can precisely target and extract data from particular points inside the document structure. Libraries like lxml in Python and XPath.js in JavaScript offer convenient XPath querying capabilities.
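
Python’s built-in `xml.etree.ElementTree` supports a limited subset of XPath, which is enough to illustrate the idea. It only parses well-formed XML, so the sample below is tidy XHTML with made-up data. For messy real-world HTML and full XPath, lxml offers `lxml.html.fromstring(page).xpath("//td[@class='price']/text()")`.

```python
import xml.etree.ElementTree as ET

# A small, well-formed XHTML fragment with hypothetical data.
DOCUMENT = """
<html>
  <body>
    <table id="prices">
      <tr><td class="name">Laptop</td><td class="price">999</td></tr>
      <tr><td class="name">Mouse</td><td class="price">25</td></tr>
    </table>
  </body>
</html>
"""

root = ET.fromstring(DOCUMENT.strip())

# ElementTree understands a subset of XPath: descendant search (.//),
# child steps (/) and attribute predicates ([@class='...']).
names = [td.text for td in root.findall(".//table[@id='prices']/tr/td[@class='name']")]
prices = [int(td.text) for td in root.findall(".//td[@class='price']")]

print(dict(zip(names, prices)))  # {'Laptop': 999, 'Mouse': 25}
```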

CSS Selectors: Like XPath, CSS selectors let you focus on particular HTML elements by class name, ID, or element type. You can use libraries like PyQuery in Python or jQuery in JavaScript to utilize CSS selectors for data extraction.
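
With BeautifulSoup installed, `soup.select("span.price")` returns every matching element. Python’s standard library has no CSS selector engine, so this dependency-free sketch implements only the simplest case: one compound selector made of an optional tag name plus `.class` and `#id` parts, with no combinators or pseudo-classes. `select_text`, `SelectorMatcher`, and `parse_selector` are hypothetical helpers, not a library API.

```python
import re
from html.parser import HTMLParser

VOID_TAGS = {"area", "base", "br", "col", "embed", "hr", "img", "input",
             "link", "meta", "source", "track", "wbr"}

# One compound selector: optional tag name followed by any .class / #id parts.
SELECTOR_RE = re.compile(r"^([a-zA-Z][\w-]*)?((?:[.#][\w-]+)*)$")


def parse_selector(selector):
    match = SELECTOR_RE.match(selector.strip())
    if not match:
        raise ValueError(f"unsupported selector: {selector!r}")
    tag, rest = match.groups()
    classes = set(re.findall(r"\.([\w-]+)", rest))
    ids = re.findall(r"#([\w-]+)", rest)
    return (tag.lower() if tag else None), classes, ids


class SelectorMatcher(HTMLParser):
    """Collects the text content of every element matching one simple selector."""

    def __init__(self, selector):
        super().__init__()
        self.tag, self.classes, self.ids = parse_selector(selector)
        self.results = []  # text per matching element, in document order
        self._open = []    # (tag, index into results or None) per open element

    def _matches(self, tag, attrs):
        attrs = dict(attrs)
        if self.tag is not None and tag != self.tag:
            return False
        if not self.classes <= set((attrs.get("class") or "").split()):
            return False
        return all(attrs.get("id") == wanted for wanted in self.ids)

    def handle_starttag(self, tag, attrs):
        if tag in VOID_TAGS:
            return  # void elements hold no text
        slot = None
        if self._matches(tag, attrs):
            slot = len(self.results)
            self.results.append("")
        self._open.append((tag, slot))

    def handle_endtag(self, tag):
        for i in range(len(self._open) - 1, -1, -1):
            if self._open[i][0] == tag:
                del self._open[i:]
                break

    def handle_data(self, data):
        # Text belongs to every matching element that is currently open.
        for _, slot in self._open:
            if slot is not None:
                self.results[slot] += data


def select_text(html, selector):
    matcher = SelectorMatcher(selector)
    matcher.feed(html)
    matcher.close()
    return [text.strip() for text in matcher.results]


HTML = """
<div id="main">
  <span class="price">$10</span>
  <span class="price sale">$7</span>
  <p class="price">not a span</p>
</div>
"""
print(select_text(HTML, "span.price"))  # ['$10', '$7']
print(select_text(HTML, ".sale"))       # ['$7']
```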

API Scraping: Some websites offer APIs for accessing their data. In these circumstances, you can query the API endpoints directly and receive data in a structured format, such as JSON or XML. This strategy is often more reliable and efficient than parsing HTML, because the data arrives already structured and does not break when the page layout changes.
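
When a documented JSON API exists, the extraction step shrinks to a dictionary lookup. In the sketch below, the endpoint `https://api.example.com/v1/products`, its `q` and `page` parameters, and the `items`/`name` response fields are hypothetical placeholders for whatever the real API documents; the demo parses a canned payload so it runs offline.

```python
import json
import urllib.parse
import urllib.request

# Hypothetical endpoint; substitute the documented API of the site you use.
API_URL = "https://api.example.com/v1/products"


def fetch_products(query: str, page: int = 1) -> dict:
    """Query a JSON API directly instead of scraping rendered HTML."""
    params = urllib.parse.urlencode({"q": query, "page": page})
    request = urllib.request.Request(
        f"{API_URL}?{params}",
        headers={"Accept": "application/json", "User-Agent": "example-scraper/0.1"},
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        return json.load(response)


def product_names(payload: dict) -> list[str]:
    """The response is already structured, so extraction is a dictionary lookup."""
    return [item["name"] for item in payload.get("items", [])]


# Offline example of the kind of payload such an API might return.
sample = json.loads('{"items": [{"name": "Laptop", "price": 999}, {"name": "Mouse", "price": 25}]}')
print(product_names(sample))  # ['Laptop', 'Mouse']
```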

Headless Browsers: Browser automation tools such as Selenium and Puppeteer drive real browsers, usually in headless mode (without a visible window), and mimic the actions of a human user. They make it possible to interact with websites by running JavaScript, clicking buttons, and filling in forms. This method is suitable for scraping dynamic websites that rely on JavaScript to render their content.
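
A minimal sketch with Selenium’s Python bindings, assuming Selenium 4 and a local Chrome installation (neither is part of the standard library). The function name `scrape_rendered` and its parameters are hypothetical; the imports sit inside the function so the module can be loaded even where Selenium is not installed. `--headless=new` is the flag for recent Chrome versions; older ones use `--headless`.

```python
def scrape_rendered(url: str, css_selector: str, timeout: float = 10.0) -> list[str]:
    """Load a JavaScript-heavy page in headless Chrome and return matching texts.

    Requires the third-party `selenium` package (Selenium 4) and Chrome.
    """
    from selenium import webdriver
    from selenium.webdriver.chrome.options import Options
    from selenium.webdriver.common.by import By
    from selenium.webdriver.support import expected_conditions as EC
    from selenium.webdriver.support.ui import WebDriverWait

    options = Options()
    options.add_argument("--headless=new")  # run Chrome without a visible window
    driver = webdriver.Chrome(options=options)
    try:
        driver.get(url)
        # Wait until the page's JavaScript has rendered at least one matching element.
        WebDriverWait(driver, timeout).until(
            EC.presence_of_element_located((By.CSS_SELECTOR, css_selector))
        )
        return [el.text for el in driver.find_elements(By.CSS_SELECTOR, css_selector)]
    finally:
        driver.quit()  # always close the browser, even if waiting timed out
```

For example, `scrape_rendered("https://example.com/app", "div.result")` (a hypothetical URL and selector) waits for the results to render and returns their visible text.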

Rate Limiting and Proxies: Implementing rate limiting (controlling how often your scraper sends requests) and proxy rotation (switching between several IP addresses) helps prevent your scraper from being blacklisted or restricted by websites. Throttling requests also avoids overloading the target server, which makes for a more responsible scraping process.
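
Client-side throttling and proxy rotation can be sketched with the standard library. `PoliteFetcher` is a hypothetical helper: it waits so that consecutive requests are at least `min_interval` seconds apart and cycles through a list of proxy URLs in round-robin order. Any proxy addresses you pass in are placeholders.

```python
import itertools
import time
import urllib.request


class PoliteFetcher:
    """Keeps requests at least `min_interval` seconds apart and rotates proxies.

    `clock` and `sleep` are injectable so the throttling can be tested offline.
    """

    def __init__(self, min_interval, proxies=None, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = min_interval
        self._proxies = itertools.cycle(proxies) if proxies else None
        self._clock = clock
        self._sleep = sleep
        self._last_request = None

    def wait_turn(self):
        """Sleep just long enough to respect the minimum interval."""
        now = self._clock()
        if self._last_request is not None:
            remaining = self.min_interval - (now - self._last_request)
            if remaining > 0:
                self._sleep(remaining)
                now += remaining
        self._last_request = now

    def next_proxy(self):
        """Return the next proxy URL in round-robin order, or None if none are set."""
        return next(self._proxies) if self._proxies is not None else None

    def fetch(self, url):
        self.wait_turn()
        proxy = self.next_proxy()
        handlers = [urllib.request.ProxyHandler({"http": proxy, "https": proxy})] if proxy else []
        opener = urllib.request.build_opener(*handlers)
        with opener.open(url, timeout=10) as response:
            return response.read()


# Hypothetical usage:
# fetcher = PoliteFetcher(2.0, proxies=["http://proxy-a.example:8080", "http://proxy-b.example:8080"])
# html = fetcher.fetch("https://example.com/products")
```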

That’s all about how to pull data from a website!

How to Mitigate Web Scraping?

Developers can significantly reduce the effectiveness of web scraping, and protect their websites and data from unauthorized access and misuse, by combining several defensive measures.

Let’s have a look at these helpful strategies:

Implement rate limiting: Limit the number of requests per IP address or impose rate limitations on API endpoints to stop excessive scraping.
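
Per-IP rate limiting on the server side can be sketched as a sliding-window counter. This in-memory version is a hypothetical helper for a single process; a deployment with several servers would usually keep the counters in a shared store instead. The IP addresses are from the documentation ranges.

```python
import time
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """Allow at most `limit` requests per client IP within any `window`-second span."""

    def __init__(self, limit, window, clock=time.monotonic):
        self.limit = limit
        self.window = window
        self._clock = clock
        self._hits = defaultdict(deque)  # ip -> timestamps of recently allowed requests

    def allow(self, ip, now=None):
        now = self._clock() if now is None else now
        hits = self._hits[ip]
        # Drop timestamps that have slid out of the window.
        while hits and now - hits[0] >= self.window:
            hits.popleft()
        if len(hits) >= self.limit:
            return False  # caller would typically answer HTTP 429 Too Many Requests
        hits.append(now)
        return True


limiter = SlidingWindowLimiter(limit=3, window=60)
print([limiter.allow("203.0.113.7", now=t) for t in (0, 1, 2, 3)])  # [True, True, True, False]
```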

Use CAPTCHA or reCAPTCHA: Integrate CAPTCHA or reCAPTCHA challenges to distinguish between human users and bots in critical areas of your website.
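
reCAPTCHA has a client part (the widget, which adds a `g-recaptcha-response` token to the submitted form) and a server part, where your backend sends that token and your secret key to Google’s `siteverify` endpoint and checks the `success` field of the JSON reply. The sketch below covers the server part with the standard library; the secret and token values are placeholders, and `fake_opener` exists only so the example runs offline.

```python
import io
import json
import urllib.parse
import urllib.request

VERIFY_URL = "https://www.google.com/recaptcha/api/siteverify"


def verify_recaptcha(token, secret, remote_ip=None, opener=urllib.request.urlopen):
    """Ask Google whether the token submitted with a form came from a solved challenge.

    `opener` defaults to urlopen and is injectable for offline testing.
    """
    fields = {"secret": secret, "response": token}
    if remote_ip:
        fields["remoteip"] = remote_ip
    body = urllib.parse.urlencode(fields).encode()
    request = urllib.request.Request(VERIFY_URL, data=body)  # data= makes it a POST
    with opener(request, timeout=10) as response:
        result = json.load(response)
    return bool(result.get("success"))


# Offline demo: a stand-in opener that returns a canned siteverify-style reply.
def fake_opener(request, timeout):
    return io.BytesIO(b'{"success": true, "hostname": "example.com"}')


print(verify_recaptcha("token-from-form", "your-secret-key", opener=fake_opener))  # True
```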

User-agent verification: Check the user agent of incoming requests to spot odd or suspicious patterns, and block requests whose user agents are malformed or suspicious.
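
A simple user-agent screen might look like the sketch below. The pattern list is an illustrative assumption, not a vetted blocklist. Because scrapers can send any User-Agent they like (many imitate browsers), this check only catches unsophisticated clients and works best combined with the other measures.

```python
import re

# Substrings typical of automated HTTP clients. Illustrative, not exhaustive.
AUTOMATION_UA = re.compile(
    r"python-requests|python-urllib|curl/|wget/|scrapy|headlesschrome|phantomjs",
    re.IGNORECASE,
)


def is_suspicious_user_agent(user_agent):
    """Flag missing, implausibly short, or automation-looking User-Agent values."""
    if not user_agent or len(user_agent.strip()) < 10:
        return True
    return AUTOMATION_UA.search(user_agent) is not None


for ua in ["", "curl/8.4.0", "Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 Chrome/120.0 Safari/537.36"]:
    print(repr(ua), is_suspicious_user_agent(ua))
```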

IP blocking: Monitor incoming requests and block IP addresses that make repeated requests or access restricted sections.
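
A hypothetical in-memory blocklist that combines both triggers from the IP-blocking advice: too many requests overall, or repeated hits on restricted paths. The path prefixes and thresholds are assumptions; for simplicity the counters never reset, whereas a real deployment would count within a time window and let blocks expire.

```python
from collections import Counter

# Hypothetical restricted paths; adjust for your own site.
RESTRICTED_PREFIXES = ("/admin", "/internal", "/api/private")


class IPBlocklist:
    """Block IPs that exceed a request budget or keep probing restricted paths."""

    def __init__(self, max_requests=1000, max_restricted_hits=3):
        self.max_requests = max_requests
        self.max_restricted_hits = max_restricted_hits
        self.requests = Counter()
        self.restricted_hits = Counter()
        self.blocked = set()

    def check(self, ip, path):
        """Record a request and return True if it should be served."""
        if ip in self.blocked:
            return False
        self.requests[ip] += 1
        if path.startswith(RESTRICTED_PREFIXES):
            self.restricted_hits[ip] += 1
        if (self.requests[ip] > self.max_requests
                or self.restricted_hits[ip] >= self.max_restricted_hits):
            self.blocked.add(ip)
            return False
        return True
```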

Implement session management: Require users to log in or present authentication tokens. Monitor session activity and terminate suspicious sessions.
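
One way to implement authentication tokens is to sign a user ID and an expiry time with HMAC, so the server can reject forged or expired sessions without a database lookup. This is a hypothetical scheme for illustration (web frameworks and standards such as JWT provide vetted alternatives), and `SECRET_KEY` is a placeholder.

```python
import hashlib
import hmac
import time

# Placeholder secret; load a long random value from configuration in practice.
SECRET_KEY = b"replace-with-a-long-random-secret"


def _sign(payload):
    return hmac.new(SECRET_KEY, payload.encode(), hashlib.sha256).hexdigest()


def issue_token(user_id, ttl=3600, now=None):
    """Create a token of the form '<user_id>:<expiry>:<signature>'."""
    expires = int((time.time() if now is None else now) + ttl)
    payload = f"{user_id}:{expires}"
    return f"{payload}:{_sign(payload)}"


def verify_token(token, now=None):
    """Return the user id if the token is authentic and unexpired, else None."""
    try:
        user_id, expires, signature = token.rsplit(":", 2)
        expires_at = int(expires)
    except ValueError:
        return None  # malformed token
    if not hmac.compare_digest(signature, _sign(f"{user_id}:{expires}")):
        return None  # forged or tampered token
    if (time.time() if now is None else now) >= expires_at:
        return None  # expired session
    return user_id


token = issue_token("alice", ttl=60, now=1000)
print(verify_token(token, now=1030))  # alice
print(verify_token(token, now=1060))  # None
```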

Obfuscate HTML structure: Use randomized class names or IDs and vary the HTML structure of your pages, making it harder for scrapers that rely on fixed selectors to find and extract data. A content delivery network (CDN) can also help by spreading your content across many servers, so heavy scraping traffic does not all land on a single origin server.
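
Randomized class names can be produced at build or deploy time by renaming classes consistently in both the HTML and the CSS. The sketch below is a hypothetical, regex-based illustration that assumes double-quoted `class` attributes; a real build step would use a proper HTML and CSS parser.

```python
import re
import secrets


def build_class_map(class_names):
    """Give each readable class name a random replacement (regenerate per deploy)."""
    return {name: "c" + secrets.token_hex(4) for name in class_names}


def obfuscate_html(html, class_map):
    """Rewrite double-quoted class attributes; names missing from the map are kept."""
    def rename(match):
        renamed = [class_map.get(name, name) for name in match.group(1).split()]
        return 'class="' + " ".join(renamed) + '"'
    return re.sub(r'class="([^"]*)"', rename, html)


def obfuscate_css(css, class_map):
    """Apply the same renaming to class selectors in a stylesheet."""
    return re.sub(
        r"\.([A-Za-z_][\w-]*)",
        lambda m: "." + class_map.get(m.group(1), m.group(1)),
        css,
    )


SAMPLE = '<span class="price sale">$7</span><h2 class="title">Mouse</h2>'
class_map = build_class_map(["price", "title"])
print(obfuscate_html(SAMPLE, class_map))
print(obfuscate_css(".price { color: red; }", class_map))
```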

Use JavaScript challenges: Require client-side scripts to run before page content is rendered or can be interacted with. This stops simple scrapers that only download raw HTML, although headless browsers can still get through.
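
One concrete form of JavaScript challenge is a small proof of work (the hashcash idea): the server issues a random nonce, the page’s script must find a counter whose SHA-256 hash together with the nonce starts with a given number of zero bits, and the server verifies the answer. This is a swapped-in illustrative technique, not the only kind of JS challenge, and the function names are hypothetical. The solver is written in Python so the sketch can be tested; in the browser it would be JavaScript. Headless browsers can still solve such challenges, but doing so costs the scraper CPU time on every page.

```python
import hashlib
import secrets

DIFFICULTY_BITS = 16  # more bits = more client work (about 2**bits hashes on average)


def issue_challenge():
    """Random nonce sent to the browser along with the challenge script."""
    return secrets.token_hex(16)


def leading_zero_bits(digest):
    value = int.from_bytes(digest, "big")
    return len(digest) * 8 - value.bit_length()


def verify_solution(nonce, counter, difficulty=DIFFICULTY_BITS):
    """Accept if SHA-256(nonce + counter) starts with `difficulty` zero bits.

    A real deployment would also remember issued nonces and accept each one
    only once, so a solved challenge cannot be replayed.
    """
    digest = hashlib.sha256(f"{nonce}{counter}".encode()).digest()
    return leading_zero_bits(digest) >= difficulty


def solve(nonce, difficulty=DIFFICULTY_BITS):
    """What the page's JavaScript would do, written in Python for testing."""
    counter = 0
    while not verify_solution(nonce, counter, difficulty):
        counter += 1
    return counter


nonce = issue_challenge()
answer = solve(nonce, difficulty=8)  # low difficulty keeps the demo fast
print(verify_solution(nonce, answer, difficulty=8))  # True
```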

Monitor access logs: Examine server access logs frequently to look for odd patterns, frequent queries, or traffic spikes that might point to scraping activity.
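
Log monitoring can start as simply as counting requests per client IP. The sketch below parses lines in the Common Log Format (combined-format lines also match, since they begin with the same fields) and flags IPs above a threshold; the sample lines, the threshold, and the helper name `flag_heavy_clients` are made up for illustration.

```python
import re
from collections import Counter

# Common Log Format: host ident authuser [date] "request" status bytes
LOG_LINE = re.compile(r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] "(?P<request>[^"]*)" (?P<status>\d{3}) \S+')


def flag_heavy_clients(lines, threshold):
    """Return {ip: request_count} for clients with more than `threshold` requests."""
    counts = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if match:  # silently skip lines that are not in the expected format
            counts[match["ip"]] += 1
    return {ip: n for ip, n in counts.most_common() if n > threshold}


SAMPLE_LOG = [
    '203.0.113.7 - - [10/Oct/2023:13:55:36 +0000] "GET /products?page=1 HTTP/1.1" 200 5120',
    '203.0.113.7 - - [10/Oct/2023:13:55:37 +0000] "GET /products?page=2 HTTP/1.1" 200 5090',
    '203.0.113.7 - - [10/Oct/2023:13:55:38 +0000] "GET /products?page=3 HTTP/1.1" 200 5133',
    '198.51.100.4 - - [10/Oct/2023:13:56:01 +0000] "GET /about HTTP/1.1" 200 2048',
]
print(flag_heavy_clients(SAMPLE_LOG, threshold=2))  # {'203.0.113.7': 3}
```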

Legal measures: Explicitly prohibit web scraping in your terms of service and, where necessary, take legal action against malicious scrapers.

Top Data Scraping Tools on the Market

#1. Zenscrape

Bright Data, Apify, ParseHub, and many more: the market is flooded with options when it comes to choosing the best data scraping tool. While each comes with its own strengths and limitations, Zenscrape’s flexibility and advanced technology help developers scrape large volumes of data in seconds, leaving the other options in the dust!

It offers a range of features and functionalities that make it a popular choice among data enthusiasts. Some key features of Zenscrape include:

- Ease of Use: Zenscrape provides a user-friendly interface that simplifies the data scraping process, allowing users to extract data effortlessly.

- Web Scraping APIs: Zenscrape offers robust APIs that enable seamless integration with various programming languages, making it convenient for developers.

- Proxy Support: The tool supports proxy rotation, enabling efficient, anonymous data scraping that is less likely to run into IP blocks or restrictions.

- Advanced Scraping Capabilities: Zenscrape supports dynamic content rendering, JavaScript execution, and handling of CAPTCHAs, enabling the scraping of complex websites.

- Data Quality and Reliability: Zenscrape ensures high-quality and accurate data extraction, providing reliable results for various scraping needs.

With its comprehensive features, Zenscrape proves to be a reliable and efficient tool for data scraping, catering to the needs of businesses, researchers, and developers alike. However, depending on the size and requirements of your business, other options may suit you better and save you money!

#2. Zenserp

Zenserp is a data scraping tool that specializes in retrieving search engine result pages (SERPs) data. It allows users to extract valuable information from search engines such as Google, Bing, and Yahoo.

Here are some key features of Zenserp:

- Supports various search engines, including Google, Bing, and Yahoo.

- Provides access to structured data like organic search results, featured snippets, related searches, and more.

- Offers options to scrape data at scale with high performance and accuracy.

- Enables integration with other tools and platforms through APIs.

- Provides comprehensive documentation and customer support.

Pricing: Zenserp offers a range of pricing plans starting from $49/month. They also provide a free trial with limited access to their features.

#3. Bright Data (formerly Luminati)

Bright Data is a powerful data collection platform that offers comprehensive scraping solutions. It provides a wide range of scraping capabilities and advanced features for various use cases.

Here are some notable features of Bright Data:

- Offers a global proxy network with millions of residential IPs for anonymous and reliable scraping.

- Supports data extraction from websites, search engines, e-commerce platforms, social media, and more.

- Provides advanced data manipulation and filtering options.

- Offers browser automation tools for dynamic website scraping.

- Provides detailed analytics and monitoring to track scraping performance.

Pricing: Bright Data offers customized pricing based on specific requirements. They do not offer a free plan but provide a free trial with limited access to their services.

Please note that pricing details and free plan availability might change over time, so it’s recommended to visit the respective websites for the most up-to-date information.

Final Verdict

In today’s era of data dominance, web scraping has emerged as an indispensable technique, acting as a digital key to unlocking valuable insights from websites. By automating data extraction, web scraping saves precious time and effort and opens the door to a vast repository of information that may not otherwise be available through an API. This powerful method empowers developers to pioneer innovative solutions, make informed choices, and embark on data-driven journeys.

FAQs

What Is Data Scraping?

Data scraping, or web scraping, is an automated technique to programmatically extract specific information from websites by parsing HTML or XML code. It’s used for research, lead generation, and content aggregation.

Why Is Web Scraping Important?

Web scraping unlocks information that would be hard to collect manually, automates data collection, and accelerates research, analysis, and solution-building. It gives developers valuable insights and the data needed for applications such as personalized recommendations and machine learning.

How Can Web Scraping Be Mitigated?

To counter web scraping, employ rate limiting, CAPTCHA challenges, user-agent verification, IP blocking, session management, HTML obfuscation, JavaScript challenges, access log monitoring, and legal measures. Safeguard websites and data from unauthorized scraping.

⚠ Article Disclaimer

The above article is sponsored content. Any opinions expressed in this article are those of the author and do not necessarily reflect the views of CTN News.