The use of Markov cohort models for analysis and prediction in medical applications was described as early as 1983 by Beck and Pauker.

This work became the basis for many subsequent publications on using Markov models in decision analysis.

These, in turn, have stimulated the development of software that simplifies the construction and evaluation of Markov models for the economic modeling of health care, including cost-effectiveness modeling (https://digitalho.com/cost-effectiveness-modelling/).

In this article, we will give a brief overview of Markov process theory and describe how Markov models are used in healthcare decision-making.

What is a Markov Process

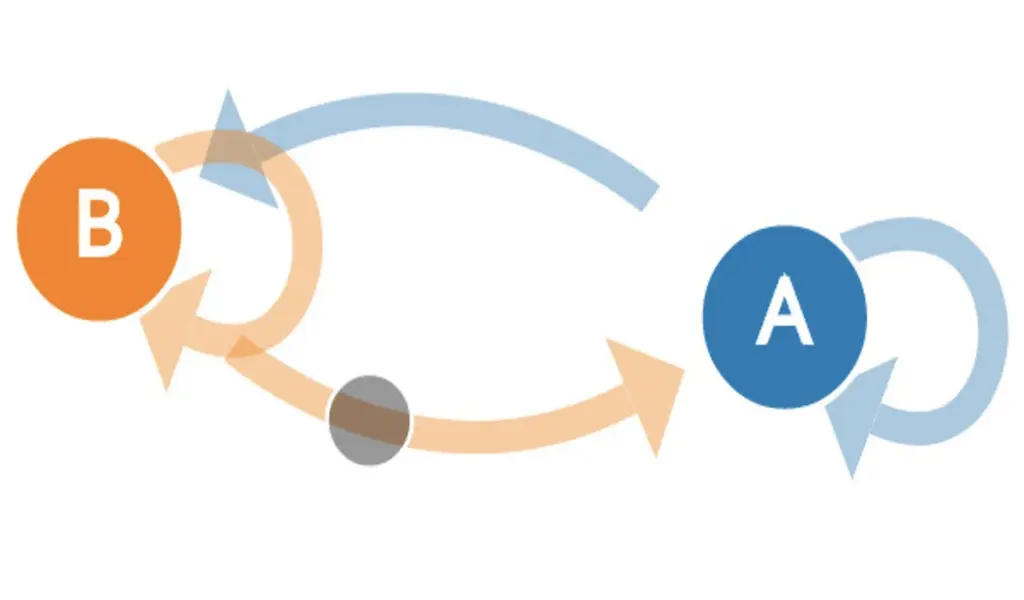

In probability theory, a Markov process (or, in the discrete case, a Markov chain) is a random process whose future evolution, once its value at the present moment is known, is independent of how it evolved before that moment.

In other words, the “future” of the process depends on the “past” only through its current state.

This defining property is commonly called the Markov property.
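For a process observed at discrete time steps, the property can be written compactly. The notation used here ($X_n$ for the state at step $n$, and $s, s_0, \dots, s_n$ for particular states) is introduced only for illustration:

$$
P(X_{n+1} = s \mid X_n = s_n, X_{n-1} = s_{n-1}, \dots, X_0 = s_0) = P(X_{n+1} = s \mid X_n = s_n)
$$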

The property was first formulated by Andrei Markov, whose work pioneered the study of sequences of dependent trials and the associated sums of random variables.

Markov chains have found wide practical application.

They are used to create statistical models of real-world processes in areas ranging from thermodynamics and chemistry to finance and health economics.

Markov models are evaluated using matrix algebra, cohort simulation (one trial, multiple subjects), or Monte Carlo simulation (many trials, one subject each).
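As a rough illustration of the Monte Carlo approach, the sketch below follows simulated patients one at a time through a hypothetical three-state model (Well, Sick, Dead). The states, the transition probabilities, and the function names are assumptions made for this example, not values from any study.

```python
import random

# Hypothetical three-state model; the states and probabilities are
# illustrative assumptions only.
STATES = ["Well", "Sick", "Dead"]
TRANSITIONS = {
    "Well": [0.85, 0.10, 0.05],
    "Sick": [0.00, 0.70, 0.30],
    "Dead": [0.00, 0.00, 1.00],  # absorbing state
}


def simulate_patient(rng, max_cycles=100):
    """Follow one simulated patient and return the number of cycles spent alive."""
    state = "Well"
    cycles_alive = 0
    for _ in range(max_cycles):
        if state == "Dead":
            break
        cycles_alive += 1
        # The next state depends only on the current state (Markov property).
        state = rng.choices(STATES, weights=TRANSITIONS[state])[0]
    return cycles_alive


def mean_survival(n_patients=10_000, seed=0):
    """Average number of cycles alive over many independent simulated patients."""
    rng = random.Random(seed)
    total = sum(simulate_patient(rng) for _ in range(n_patients))
    return total / n_patients


print(f"Estimated mean survival: {mean_survival():.2f} cycles")
```

With enough simulated patients, the estimate approaches the value that matrix algebra or a cohort simulation would give for the same model.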

Markov Models in Medical Decision Making

Markov models are most often used in cases such as:

- when decision-making is complicated by risk that is continuous over time;

- when the timing of events is important;

- when important events may occur more than once.

Markov models can describe recurring events as well as probabilities and utilities that change over time.

Because of this ability, they can represent such clinical conditions more faithfully and simulate patient prognosis under different treatment strategies.

Unlike conventional decision trees, Markov models can represent these conditions without unreasonable simplifying assumptions.

In a Markov model, a patient is assumed to be in exactly one of a finite number of discrete health states at any given time.

For example, in analyzing the possible outcomes of a surgical procedure, the nodes of the tree might represent events such as:

- different outcomes of the surgical treatment itself;

- complications;

- death.

Apart from death, the outcomes chosen as the final nodes of such a tree are not necessarily final outcomes for the patient.

They simply represent convenient points at which to stop the analysis and estimate the subsequent prognosis. In a Markov model, all events are represented as transitions from one state to another, each taking place within a fixed time interval (a cycle).

When evaluating a Markov process, the analyst can calculate the average number of cycles (or the average time) that a patient spends in each state.

Adding up these average times over all states other than death then gives the average survival time after a medical intervention.
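This calculation can be sketched as a simple cohort simulation. The three states, the transition probabilities, and the function name `expected_cycles` are assumptions made for illustration. The sketch counts state membership at the start of each cycle and applies no half-cycle correction.

```python
# Hypothetical three-state model (Well, Sick, Dead); all numbers are
# illustrative assumptions.
STATES = ["Well", "Sick", "Dead"]
P = [
    [0.85, 0.10, 0.05],  # transitions from Well
    [0.00, 0.70, 0.30],  # transitions from Sick
    [0.00, 0.00, 1.00],  # transitions from Dead (absorbing)
]


def expected_cycles(P, start, n_cycles=200):
    """Expected number of cycles a cohort member spends in each state."""
    dist = list(start)  # share of the cohort in each state
    totals = [0.0] * len(dist)
    for _ in range(n_cycles):
        # Credit each state with the share of the cohort currently in it.
        totals = [t + d for t, d in zip(totals, dist)]
        # Advance the cohort by one cycle: dist <- dist * P.
        dist = [sum(dist[i] * P[i][j] for i in range(len(P)))
                for j in range(len(P))]
    return totals


cycles = expected_cycles(P, start=[1.0, 0.0, 0.0])
# Survival is the time spent in every state other than Dead.
survival = sum(c for s, c in zip(STATES, cycles) if s != "Dead")

for s, c in zip(STATES, cycles):
    if s != "Dead":
        print(f"{s}: {c:.2f} cycles")
print(f"Mean survival: {survival:.2f} cycles")
```

For these assumed probabilities, a patient starting in Well spends about 6.67 cycles in Well and 2.22 cycles in Sick, for a mean survival of roughly 8.89 cycles.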

Numerical Tools for Evaluating Markov Models

Analysis using Markov models allows you to:

- choose the treatment regimen with the fewest adverse consequences for the patient;

- evaluate the effectiveness of a particular drug or procedure;

- calculate the cost-effectiveness of using a drug.
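One common way to summarize cost-effectiveness, not named in the list above, is the incremental cost-effectiveness ratio (ICER): the extra cost of a new strategy divided by the extra health effect it delivers. The sketch below assumes that a Markov model has already produced an average cost and an average effect (in life-years) per patient for each strategy. All figures and names are hypothetical.

```python
def icer(cost_new, effect_new, cost_old, effect_old):
    """Incremental cost-effectiveness ratio: extra cost per extra unit of effect."""
    delta_effect = effect_new - effect_old
    if delta_effect == 0:
        raise ValueError("Strategies are equally effective; the ICER is undefined.")
    return (cost_new - cost_old) / delta_effect


# Hypothetical per-patient model outputs (cost in currency units, effect in life-years).
standard = {"cost": 12_000.0, "effect": 6.0}
new_drug = {"cost": 20_000.0, "effect": 6.5}

ratio = icer(new_drug["cost"], new_drug["effect"],
             standard["cost"], standard["effect"])
print(f"ICER: {ratio:,.0f} per life-year gained")  # prints "ICER: 16,000 per life-year gained"
```

Whether such a ratio counts as cost-effective depends on the willingness-to-pay threshold chosen by the decision-maker, which this sketch does not model.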

Today, many companies develop digital applications that automate the evaluation and analysis of Markov models. Their advantages include accuracy, fast turnaround of results, ease of use, and visualization of the results.

⚠ Article Disclaimer

The above article is sponsored content. Any opinions expressed in it are those of the author and do not necessarily reflect the views of CTN News.